A general-purpose computer has four main components: the arithmetic logic unit (ALU), the control unit, the memory, and the input and output devices (collectively termed I/O). These parts are interconnected by buses, often made of groups of wires.

Inside each of these parts are thousands to trillions of small electrical circuits which can be turned off or on by means of an electronic switch. Each circuit represents a bit (binary digit) of information so that when the circuit is on it represents a "1", and when off it represents a "0" (in positive logic representation). The circuits are arranged in logic gates so that one or more of the circuits may control the state of one or more of the other circuits.

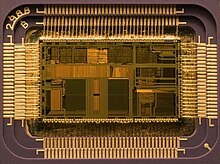

The control unit, ALU, registers, and basic I/O (and often other hardware closely linked with these) are collectively known as a central processing unit (CPU). Early CPUs were composed of many separate components, but since the mid-1970s CPUs have typically been constructed on a single integrated circuit called a microprocessor.

Control unit

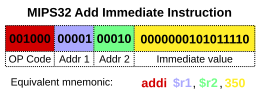

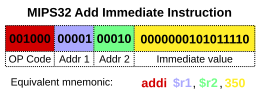

Diagram showing how a particular MIPS architecture instruction would be decoded by the control system.

The control unit (often called a control system or central controller) manages the computer's various components; it reads and interprets (decodes) the program instructions, transforming them into a series of control signals which activate other parts of the computer.

Control systems in advanced computers may change the order of some instructions so as to improve performance.

A key component common to all CPUs is the program counter, a special memory cell (a register) that keeps track of which location in memory the next instruction is to be read from.

The control system's function is as follows—note that this is a simplified description, and some of these steps may be performed concurrently or in a different order depending on the type of CPU:

- Read the code for the next instruction from the cell indicated by the program counter.

- Decode the numerical code for the instruction into a set of commands or signals for each of the other systems.

- Increment the program counter so it points to the next instruction.

- Read whatever data the instruction requires from cells in memory (or perhaps from an input device). The location of this required data is typically stored within the instruction code.

- Provide the necessary data to an ALU or register.

- If the instruction requires an ALU or specialized hardware to complete, instruct the hardware to perform the requested operation.

- Write the result from the ALU back to a memory location or to a register or perhaps an output device.

- Jump back to the first step.
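The steps above can be sketched as a loop in Python. This is only an illustration under stated assumptions: the instruction format (a tuple of opcode, two operand addresses and a destination address), the opcode names `ADD`, `SUB` and `HALT`, and the function name `run` are all invented here and do not correspond to any real CPU.

```python
# Toy machine, invented for illustration: every instruction is a tuple
# (opcode, operand_address_a, operand_address_b, destination_address).

def run(program, data):
    pc = 0  # program counter: index of the next instruction to read
    while True:
        instruction = program[pc]          # read the instruction the counter points at
        opcode, a, b, dest = instruction   # decode it into its fields
        pc += 1                            # increment the program counter
        if opcode == "HALT":
            return data
        x, y = data[a], data[b]            # read the operands from memory
        if opcode == "ADD":                # hand the operands to the "ALU"
            result = x + y
        elif opcode == "SUB":
            result = x - y
        else:
            raise ValueError(f"unknown opcode: {opcode}")
        data[dest] = result                # write the result back to memory
        # the while loop then returns to the first step


memory = [7, 5, 0, 0]
program = [
    ("ADD", 0, 1, 2),   # memory[2] = memory[0] + memory[1]
    ("SUB", 2, 1, 3),   # memory[3] = memory[2] - memory[1]
    ("HALT", 0, 0, 0),
]
final = run(program, memory)
```

A real control unit performs these steps in hardware and may overlap them; the sequential loop here is the simplified description only.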

Since the program counter is (conceptually) just another set of memory cells, it can be changed by calculations done in the ALU. Adding 100 to the program counter would cause the next instruction to be read from a place 100 locations further down the program. Instructions that modify the program counter are often known as "jumps" and allow for loops (instructions that are repeated by the computer) and often conditional instruction execution (both examples of control flow).
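As a rough illustration of how a jump produces a loop, the following Python sketch treats a jump as plain arithmetic on the program counter. The three opcodes (`ADD`, `DEC`, `JUMP_IF_NONZERO`), the named registers and the function `run_with_jumps` are hypothetical and chosen only for this example.

```python
def run_with_jumps(program, registers):
    pc = 0
    while pc < len(program):
        op, *args = program[pc]
        pc += 1  # by default, continue with the next instruction
        if op == "ADD":                    # ADD dest, src: dest = dest + src
            registers[args[0]] += registers[args[1]]
        elif op == "DEC":                  # DEC reg: reg = reg - 1
            registers[args[0]] -= 1
        elif op == "JUMP_IF_NONZERO":      # conditional jump by a relative offset
            if registers[args[0]] != 0:
                pc += args[1]              # the jump is just an addition to the counter
        else:
            raise ValueError(f"unknown opcode: {op}")
    return registers


# Add 3 to the total five times by looping back to instruction 0.
regs = {"total": 0, "step": 3, "count": 5}
loop = [
    ("ADD", "total", "step"),
    ("DEC", "count"),
    ("JUMP_IF_NONZERO", "count", -3),  # the counter is already 3 here, so -3 returns to 0
]
result = run_with_jumps(loop, regs)
```

When `count` reaches zero the jump is not taken, the program counter runs past the end of the program, and the loop stops with `total` equal to 15.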

Notably, the sequence of operations that the control unit goes through to process an instruction is itself like a short computer program. Indeed, in some more complex CPU designs, there is another, yet smaller, computer called a microsequencer that runs a microcode program that causes all of these events to happen.

Arithmetic/logic unit (ALU)

The ALU is capable of performing two classes of operations: arithmetic and logic.

The set of arithmetic operations that a particular ALU supports may be limited to adding and subtracting or might include multiplying or dividing, trigonometric functions (sine, cosine, etc.) and square roots. Some can only operate on whole numbers (integers) whilst others use floating point to represent real numbers, albeit with limited precision. However, any computer that is capable of performing just the simplest operations can be programmed to break down the more complex operations into simple steps that it can perform. Therefore, any computer can be programmed to perform any arithmetic operation, although it will take more time to do so if its ALU does not directly support the operation. An ALU may also compare numbers and return boolean truth values (true or false) depending on whether one is equal to, greater than or less than the other ("is 64 greater than 65?").
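To illustrate how a complex operation can be broken into simpler ones, the sketch below multiplies two non-negative integers using only addition, bit shifts and a bit test. Shift-and-add is one common way to do this, not necessarily what any particular machine uses, and the function name `multiply` is chosen here for illustration.

```python
def multiply(a, b):
    """Multiply two non-negative integers using only add, shift and bit test."""
    result = 0
    while b > 0:
        if b & 1:        # lowest bit of b is set: this power of two contributes
            result += a
        a <<= 1          # double a
        b >>= 1          # move on to the next bit of b
    return result


product = multiply(6, 7)       # 42
comparison = 64 > 65           # False: the comparison from the text
```

The loop runs once per bit of `b`, which is why software multiplication is slower than a single hardware multiply instruction.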

Logic operations involve Boolean logic: AND, OR, XOR and NOT. These can be useful both for creating complicated conditional statements and processing boolean logic.
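The four operations can be seen acting bit by bit on small binary numbers. In this sketch the values, the four-bit width and the `MASK` constant are arbitrary choices made for the example; Python integers are unbounded, so NOT is masked to keep the result to four bits.

```python
a = 0b1100
b = 0b1010
MASK = 0b1111  # keep results to four bits (an assumption of this example)

and_result = a & b        # 0b1000: bits set in both
or_result = a | b         # 0b1110: bits set in either
xor_result = a ^ b        # 0b0110: bits set in exactly one
not_result = ~a & MASK    # 0b0011: every bit of a inverted

# The same operators combine truth values in a conditional statement.
x = 7
in_range = (x >= 0) and not (x > 9)
```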

Superscalar computers may contain multiple ALUs so that they can process several instructions at the same time.

Graphics processors and computers with SIMD and MIMD features often provide ALUs that can perform arithmetic on vectors and matrices.

Memory

Magnetic core memory was the computer memory of choice throughout the 1960s, until it was replaced by semiconductor memory.

A computer's memory can be viewed as a list of cells into which numbers can be placed or read. Each cell has a numbered "address" and can store a single number. The computer can be instructed to "put the number 123 into the cell numbered 1357" or to "add the number that is in cell 1357 to the number that is in cell 2468 and put the answer into cell 1595". The information stored in memory may represent practically anything. Letters, numbers, even computer instructions can be placed into memory with equal ease. Since the CPU does not differentiate between different types of information, it is the software's responsibility to give significance to what the memory sees as nothing but a series of numbers.
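The "list of cells" view maps directly onto a Python list, with the index playing the role of the address. The memory size and the values stored in cells 2468 and 100 are invented for this sketch; the cell numbers 1357, 2468 and 1595 come from the example in the text.

```python
memory = [0] * 4096          # 4096 cells, an arbitrary size for the example

memory[1357] = 123           # "put the number 123 into the cell numbered 1357"
memory[2468] = 877           # assumed value, so there is something to add
memory[1595] = memory[1357] + memory[2468]

# The same stored number means different things depending on how software reads it.
memory[100] = 65
as_number = memory[100]      # 65
as_letter = chr(memory[100]) # "A" when interpreted as a character code
```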

In almost all modern computers, each memory cell is set up to store binary numbers in groups of eight bits (called a byte). Each byte is able to represent 256 different numbers (2^8 = 256); either from 0 to 255 or −128 to +127. To store larger numbers, several consecutive bytes may be used (typically, two, four or eight). When negative numbers are required, they are usually stored in two's complement notation. Other arrangements are possible, but are usually not seen outside of specialized applications or historical contexts. A computer can store any kind of information in memory if it can be represented numerically. Modern computers have billions or even trillions of bytes of memory.
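Python's built-in `int.from_bytes` and `int.to_bytes` make it possible to see the two readings of a byte and the multi-byte storage described above. The helper `twos_complement` and the little-endian byte order are choices made for this sketch; the text does not specify a byte order.

```python
raw = bytes([0xFF])  # one byte with all eight bits set

unsigned = int.from_bytes(raw, byteorder="little", signed=False)  # read as 0..255
signed = int.from_bytes(raw, byteorder="little", signed=True)     # read as -128..127


def twos_complement(value, bits=8):
    """Return the bit pattern that stores `value` in two's complement."""
    return value & ((1 << bits) - 1)


pattern = twos_complement(-128)   # 0b10000000

# A larger number stored in four consecutive bytes.
four_bytes = (1_000_000).to_bytes(4, byteorder="little")
round_trip = int.from_bytes(four_bytes, byteorder="little")
```

Here the bit pattern `0xFF` reads as 255 when treated as unsigned and as −1 when treated as a two's complement value.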

The CPU contains a special set of memory cells called registers that can be read and written to much more rapidly than the main memory area. There are typically between two and one hundred registers depending on the type of CPU. Registers are used for the most frequently needed data items to avoid having to access main memory every time data is needed. As data is constantly being worked on, reducing the need to access main memory (which is often slow compared to the ALU and control units) greatly increases the computer's speed.

Computer main memory comes in two principal varieties: random-access memory or RAM and read-only memory or ROM. RAM can be read and written to anytime the CPU commands it, but ROM is pre-loaded with data and software that never changes, so the CPU can only read from it. ROM is typically used to store the computer's initial start-up instructions. In general, the contents of RAM are erased when the power to the computer is turned off, but ROM retains its data indefinitely. In a PC, the ROM contains a specialized program called the BIOS that orchestrates loading the computer's operating system from the hard disk drive into RAM whenever the computer is turned on or reset. In embedded computers, which frequently do not have disk drives, all of the required software may be stored in ROM. Software stored in ROM is often called firmware, because it is notionally more like hardware than software.

Flash memory blurs the distinction between ROM and RAM, as it retains its data when turned off but is also rewritable. It is typically much slower than conventional ROM and RAM, however, so its use is restricted to applications where high speed is unnecessary.

In more sophisticated computers there may be one or more RAM cache memories which are slower than registers but faster than main memory. Generally computers with this sort of cache are designed to move frequently needed data into the cache automatically, often without the need for any intervention on the programmer's part.
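The idea of a cache sitting in front of main memory can be modelled in a few lines of Python. Everything specific here is an assumption made for the sketch: the class name `CachedMemory`, the capacity of two entries, and in particular the least-recently-used replacement policy, which the text does not specify and which real hardware often only approximates.

```python
from collections import OrderedDict


class CachedMemory:
    """Main memory fronted by a small cache with least-recently-used eviction."""

    def __init__(self, main_memory, capacity):
        self.main_memory = main_memory
        self.capacity = capacity
        self.cache = OrderedDict()   # address -> value, oldest entry first
        self.hits = 0
        self.misses = 0

    def read(self, address):
        if address in self.cache:
            self.hits += 1
            self.cache.move_to_end(address)   # mark as most recently used
            return self.cache[address]
        self.misses += 1
        value = self.main_memory[address]     # the slow path
        self.cache[address] = value           # keep a copy automatically
        if len(self.cache) > self.capacity:
            self.cache.popitem(last=False)    # evict the least recently used entry
        return value


mem = CachedMemory(list(range(100, 200)), capacity=2)
values = [mem.read(address) for address in (0, 1, 0, 2, 1)]
```

The calling code only ever asks for an address; whether the value came from the cache or from main memory is invisible to it, which mirrors the point that no intervention from the programmer is needed.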

Input/output (I/O)

I/O is the means by which a computer exchanges information with the outside world. Devices that provide input or output to the computer are called peripherals. On a typical personal computer, peripherals include input devices like the keyboard and mouse, and output devices such as the display and printer. Hard disk drives, floppy disk drives and optical disc drives serve as both input and output devices. Computer networking is another form of I/O.

Often, I/O devices are complex computers in their own right with their own CPU and memory. A graphics processing unit might contain fifty or more tiny computers that perform the calculations necessary to display 3D graphics. Modern desktop computers contain many smaller computers that assist the main CPU in performing I/O.

Multitasking

While a computer may be viewed as running one gigantic program stored in its main memory, in some systems it is necessary to give the appearance of running several programs simultaneously. This is achieved by multitasking, i.e., having the computer switch rapidly between running each program in turn.

One means by which this is done is with a special signal called an interrupt which can periodically cause the computer to stop executing instructions where it was and do something else instead. By remembering where it was executing prior to the interrupt, the computer can return to that task later. If several programs are running "at the same time", then the interrupt generator might be causing several hundred interrupts per second, causing a program switch each time. Since modern computers typically execute instructions several orders of magnitude faster than human perception, it may appear that many programs are running at the same time even though only one is ever executing in any given instant. This method of multitasking is sometimes termed "time-sharing" since each program is allocated a "slice" of time in turn.
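Time-sharing can be imitated in Python with generators, where each `yield` stands in for the point at which an interrupt would suspend a program. This is a sketch of the scheduling idea only: real interrupts are raised by hardware rather than by the program itself, and the names `program` and `round_robin` are invented here.

```python
from collections import deque


def program(name, steps):
    """A pretend program that needs `steps` time slices to finish."""
    for i in range(steps):
        yield f"{name}:{i}"   # one slice of work, then control is given up


def round_robin(programs):
    ready = deque(programs)
    trace = []
    while ready:
        current = ready.popleft()
        try:
            trace.append(next(current))   # resume where the program left off
        except StopIteration:
            continue                      # the program has finished; drop it
        ready.append(current)             # otherwise it waits for its next turn
    return trace


trace = round_robin([program("A", 3), program("B", 1), program("C", 2)])
```

Only one program runs at any instant, yet the trace interleaves all three, which is the effect described above. A generator remembers its own position between calls, much as the computer remembers where it was executing before the interrupt.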

Before the era of cheap computers, the principal use for multitasking was to allow many people to share the same computer.

Seemingly, multitasking would cause a computer that is switching between several programs to run more slowly, in direct proportion to the number of programs it is running. However, most programs spend much of their time waiting for slow input/output devices to complete their tasks. If a program is waiting for the user to click on the mouse or press a key on the keyboard, then it will not take a "time slice" until the event it is waiting for has occurred. This frees up time for other programs to execute so that many programs may be run at the same time without unacceptable speed loss.

Multiprocessing

Cray designed many supercomputers that used multiprocessing heavily.

Some computers are designed to distribute their work across several CPUs in a multiprocessing configuration, a technique once employed only in large and powerful machines such as supercomputers, mainframe computers and servers. Multiprocessor and multi-core (multiple CPUs on a single integrated circuit) personal and laptop computers are now widely available, and are being increasingly used in lower-end markets as a result.

Supercomputers in particular often have highly distinctive architectures that differ significantly from the basic stored-program architecture and from general-purpose computers. They often feature thousands of CPUs, customized high-speed interconnects, and specialized computing hardware. Such designs tend to be useful only for specialized tasks due to the large scale of program organization required to successfully utilize most of the available resources at once. Supercomputers usually see usage in large-scale simulation, graphics rendering, and cryptography applications, as well as with other so-called "embarrassingly parallel" tasks.
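An embarrassingly parallel task is one that splits into pieces needing no communication with each other. The sketch below counts primes below 1000 by giving four independent ranges to a pool of workers from Python's standard `concurrent.futures` module. The function name `count_primes` and the chunk boundaries are choices made for this example. Note that threads in standard CPython do not run CPU-bound code simultaneously, so this shows the structure of the technique rather than a speed-up; substituting `ProcessPoolExecutor` is the usual way to use several CPUs.

```python
from concurrent.futures import ThreadPoolExecutor


def count_primes(bounds):
    """Count the primes n with low <= n < high by trial division."""
    low, high = bounds
    count = 0
    for n in range(max(low, 2), high):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


# Each chunk can be processed without knowing anything about the others.
chunks = [(0, 250), (250, 500), (500, 750), (750, 1000)]
with ThreadPoolExecutor(max_workers=4) as pool:
    partial_counts = list(pool.map(count_primes, chunks))

total = sum(partial_counts)   # 168 primes below 1000
```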

Networking and the Internet

Computers have been used to coordinate information between multiple locations since the 1950s. The U.S. military's SAGE system was the first large-scale example of such a system, which led to a number of special-purpose commercial systems like Sabre.

In the 1970s, computer engineers at research institutions throughout the United States began to link their computers together using telecommunications technology. This effort was funded by ARPA (now DARPA), and the computer network that it produced was called the ARPANET.

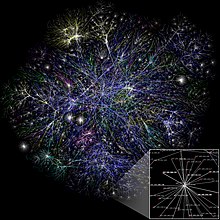

The technologies that made the ARPANET possible spread and evolved. In time, the network spread beyond academic and military institutions and became known as the Internet. The emergence of networking involved a redefinition of the nature and boundaries of the computer. Computer operating systems and applications were modified to include the ability to define and access the resources of other computers on the network, such as peripheral devices, stored information, and the like, as extensions of the resources of an individual computer. Initially these facilities were available primarily to people working in high-tech environments, but in the 1990s the spread of applications like e-mail and the World Wide Web, combined with the development of cheap, fast networking technologies like Ethernet and ADSL, saw computer networking become almost ubiquitous. The number of networked computers continues to grow rapidly. A very large proportion of personal computers regularly connect to the Internet to communicate and receive information. "Wireless" networking, often utilizing mobile phone networks, has meant networking is becoming increasingly ubiquitous even in mobile computing environments.